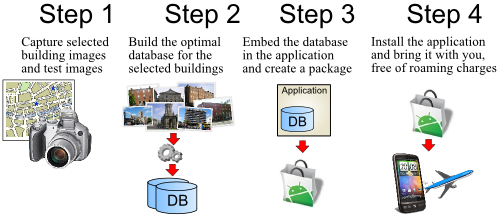

This MSc thesis for Trinity College Dublin implements building recognition on Android mobile devices using captured camera frames, without requiring a network connection to a remote server. The visual feature database used for recognition is stored on the device, so it must be optimized to reduce its size. This optimization is achieved using clustering and genetic algorithms.

The research covers the design and development of a framework for creating a small visual feature database. This database is intended for mobile devices, where it supports building recognition in a self-contained "Tell me what I am looking at" application that takes two inputs: GPS data and camera images.

The main contribution of this approach is exploring the automated creation of a compact local visual features database to be installed on the mobile device. Using a local database is justified by scenarios where a data connection to a remote server is not available or too expensive (e.g. tourists using data roaming abroad).

Creating a compact database requires a balance between various constraints. The number of visual features in the database affects both the size of the database on the limited storage of a mobile platform and the computation time of the image matching. However, a database with too few features leads to poor recognition results. This research evaluates the use of a genetic algorithm that selects the best parameters for building the database through visual feature clustering.
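To make the trade-off concrete, here is a minimal sketch of a genetic algorithm searching for a single clustering parameter. It is an illustration only, not the thesis implementation: the parameter (`num_clusters`), the `recognition_score` proxy, the `size_weight` penalty, and all numeric ranges are hypothetical assumptions. A real evaluation would build the clustered database for each candidate and measure recognition accuracy on test images.

```python
import random


def recognition_score(num_clusters: int) -> float:
    # Hypothetical stand-in for measured recognition accuracy:
    # more clusters help, with diminishing returns.
    return num_clusters / (num_clusters + 200.0)


def fitness(num_clusters: int, size_weight: float = 0.0005) -> float:
    # Reward the accuracy proxy and penalize database size, assumed here
    # to grow linearly with the number of clusters.
    return recognition_score(num_clusters) - size_weight * num_clusters


def evolve(generations=40, population_size=20, low=10, high=2000, seed=0):
    rng = random.Random(seed)
    population = [rng.randint(low, high) for _ in range(population_size)]
    for _ in range(generations):
        # Selection: the fitter half survives unchanged and acts as parents.
        population.sort(key=fitness, reverse=True)
        parents = population[: population_size // 2]
        children = []
        while len(parents) + len(children) < population_size:
            a, b = rng.sample(parents, 2)
            child = (a + b) // 2           # crossover: average of two parents
            child += rng.randint(-50, 50)  # mutation: small random shift
            children.append(min(high, max(low, child)))
        population = parents + children
    return max(population, key=fitness)


best = evolve()
print(best, round(fitness(best), 4))
```

Because the fitter half of each generation is carried over unchanged, the best candidate never gets worse from one generation to the next. The size penalty keeps the search from simply maximizing the number of clusters.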

Marco Conti

